Years back, a typical QA cycle consisted of manually written test cases, regression tests executed step by step, and hours or days of effort to log bugs. Industry reports suggest that fixing a bug after release can cost up to 30x more than fixing it during development.

So what removed this friction from testing? Artificial Intelligence (AI). It shifted our focus from “Did we test everything?” to “Are we testing the right things?”

In this blog, we review how AI in software testing is redefining the Quality Assurance (QA) landscape by automating repetitive test workflows, enhancing test coverage, and identifying potential defects before they reach production.

Limitations of Manual QA Testing

Manual QA testing has several drawbacks when measured against today’s software quality standards. Modern AI-driven testing solutions help testing teams uncover issues earlier in the software development lifecycle (SDLC), increase operational efficiency, and significantly accelerate software delivery timelines.

Let’s look at how traditional manual QA approaches struggle to keep pace with the growing demands of data-driven, complex, and rapidly evolving modern applications.

1# Time-Consuming Testing

Manual testing requires you to repeatedly execute the same test cases across multiple devices, environments, and builds. For example, manually running a regression test on an e-commerce platform after every single update, validating user logins, checkout workflows, and payment gateways each time, can take days. Ultimately, the release cycle is delayed.

2# Limited Test Coverage

Even experienced human testers can hardly execute the thousands of input combinations and the endless number of user journeys found in banking or healthcare applications, because testing every edge case manually is impractical. This leaves boundary conditions untested and can result in transaction failures.

3# Higher Risk of Human Error

Manual testers need precision, consistency, and attention to detail to perform repetitive tasks like verifying form validations or UI elements across multiple screens. A moment of fatigue or oversight may lead a tester to miss a minor UI misalignment or an intermittent bug that appears only under specific conditions, allowing defects to slip into production.

4# Difficulty Handling Large Data Sets

Modern applications, particularly in domains like fintech, analytics, or e-commerce, generate and process massive amounts of data such as transaction records, user behavior logs, or real-time analytics. Validating data at this volume by hand is slow, which makes it difficult for testers to analyze and test all scenarios effectively.

These challenges are driving organizations toward AI-powered quality assurance solutions that augment human capabilities while addressing the limitations of manual testing.

AI Test Case Generation

WeTest reports that AI-driven test automation increases test coverage by 35% while slashing manual workloads by 40%.

AI-powered systems can analyze requirements documents, learn from past test cases, track user behavior patterns, and generate meaningful test scenarios automatically.

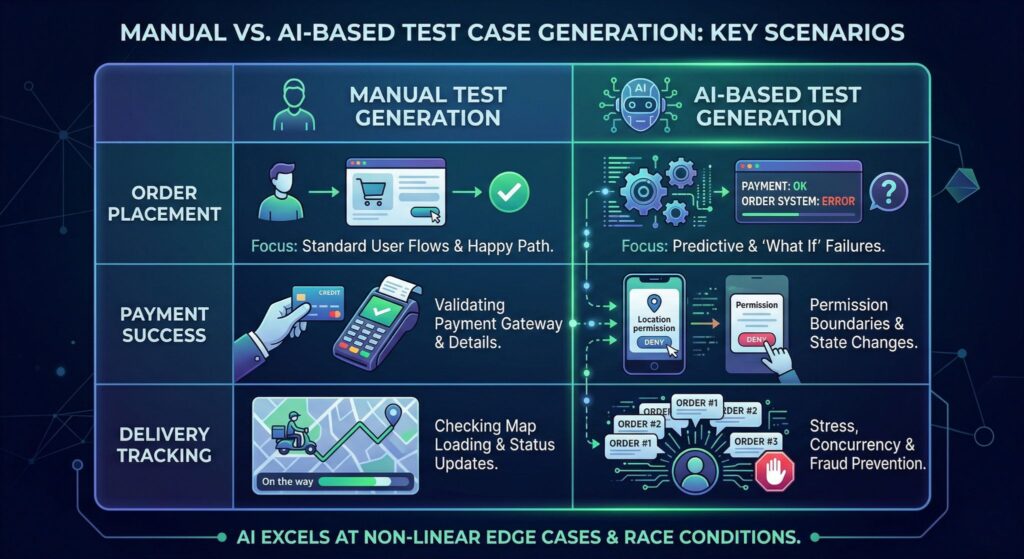

Imagine you are testing a food delivery app. Here is how the scenarios covered by the two approaches differ:

| Manual Test Case Generation | AI-Based Test Case Generation |
| --- | --- |
| Order placement | What happens if payment succeeds but the order fails? |
| Payment success | What if location access is denied mid-order? |
| Delivery tracking | What if the same user places multiple rapid orders? |

Benefits of AI Test Case Generation

- Automated test case creation from requirements reduces dependency on manual scripting. For example, generating login and authentication test cases directly from a Jira ticket.

- Improves test coverage by generating multiple input combinations and user flows. For example, testing various payment methods, currencies, and failure scenarios in an e-commerce checkout system.

- AI test case generation reduces manual effort for QA teams by automating repetitive test design tasks such as form validation or API response checks.

- Identifies edge cases and hidden scenarios that are often missed by manual review. For example, boundary value failures in financial calculations or simultaneous user requests that cause race conditions.

Artificial intelligence testing tools can continuously learn from previous test results, improving the quality of generated test cases over time.
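To make the idea of generating input combinations and boundary cases concrete, here is a minimal sketch in Python. It is plain combinatorial generation rather than AI: an AI-based generator would decide which dimensions and values matter by learning from requirements and past results. The dimension names, values, and the `expect_success` field are all hypothetical.

```python
from itertools import product

# Hypothetical checkout dimensions; replace with values from your own requirements.
PAYMENT_METHODS = ["card", "wallet", "cash_on_delivery"]
CURRENCIES = ["USD", "EUR", "INR"]
FAILURE_MODES = ["none", "gateway_timeout", "insufficient_funds"]


def generate_checkout_cases():
    """Return one test case dict per combination of the dimensions above."""
    cases = []
    for method, currency, failure in product(PAYMENT_METHODS, CURRENCIES, FAILURE_MODES):
        cases.append({
            "payment_method": method,
            "currency": currency,
            "failure_mode": failure,
            # A case is expected to succeed only when no failure is injected.
            "expect_success": failure == "none",
        })
    return cases


def boundary_values(minimum, maximum):
    """Boundary value analysis: just outside, on, and just inside each limit."""
    return [minimum - 1, minimum, minimum + 1, maximum - 1, maximum, maximum + 1]


cases = generate_checkout_cases()
print(len(cases))               # 27 combinations (3 x 3 x 3)
print(boundary_values(1, 100))  # [0, 1, 2, 99, 100, 101]
```

Even three small dimensions produce 27 cases, which shows why exhaustive manual design does not scale and why tools that prune or prioritize combinations are attractive.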

AI in Test Case Generation:

Application Requirements

↓

AI Test Case Generator

↓

Automated Test Cases

AI in Regression Testing

Regression testing ensures that new code changes do not negatively affect existing functionality. However, regression test suites can become extremely large and difficult to maintain.

An article published in IJISAE reports that a traditional regression suite may take 8 hours to execute, whereas AI-driven test selection can bring this down to 4 hours.

AI analyzes recent code changes, impacted modules, and historical bug patterns and then selects only the most relevant test cases to execute.

For example, when a developer updates the checkout logic, the AI will skip unrelated modules like user profile or search and prioritize payment tests. Regression cycles can shrink from hours to minutes, and releases ship faster without compromising quality.
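The selection step in the checkout example can be sketched as a simple lookup from changed files to the tests that cover them. This is a rule-based stand-in, not a learned model: real tools typically derive the mapping from coverage data and history. The `COVERAGE_MAP` contents and file paths below are hypothetical.

```python
# Hypothetical mapping from source path prefixes to the test files that cover them.
COVERAGE_MAP = {
    "checkout/": ["tests/test_checkout.py", "tests/test_payment.py"],
    "profile/": ["tests/test_profile.py"],
    "search/": ["tests/test_search.py"],
}


def select_tests(changed_files):
    """Return the sorted set of test files covering any changed file."""
    selected = set()
    for path in changed_files:
        for prefix, tests in COVERAGE_MAP.items():
            if path.startswith(prefix):
                selected.update(tests)
    return sorted(selected)


changed = ["checkout/cart.py", "checkout/pricing.py"]
print(select_tests(changed))
# ['tests/test_checkout.py', 'tests/test_payment.py']
```

A change outside every mapped prefix selects nothing here; in practice a team would likely fall back to running the full suite in that case rather than skipping tests.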

Benefits of AI in Regression Testing

- AI prioritizes high-risk test cases, such as those for payment, authentication, or checkout modules, after recent commits in tools like Jenkins or GitHub, so the most failure-prone areas are tested first.

- AI reduces regression testing time by intelligently selecting and executing only impacted test cases and skipping unaffected modules in a microservices architecture.

- AI improves testing efficiency by running tests in parallel and adapting based on past outcomes, as tools like Testim or Mabl do to speed up UI and functional testing.

- AI minimizes redundant test execution by eliminating duplicate or low-value test cases, such as those for static content pages, which makes better use of resources.
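The prioritization described in this list can be approximated with a small scoring function. The sketch below ranks tests by historical failure rate plus a bonus when they cover recently changed code. The `CaseHistory` type, the `change_weight` value, and the sample data are assumptions for illustration, not the scoring used by any particular tool.

```python
from dataclasses import dataclass


@dataclass
class CaseHistory:
    name: str
    runs: int             # how many times the test has run
    failures: int         # how many of those runs failed
    touches_change: bool  # whether the test covers recently changed code


def risk_score(record, change_weight=0.5):
    """Failure rate in [0, 1], plus a bonus when the test covers changed code."""
    failure_rate = record.failures / record.runs if record.runs else 0.0
    return failure_rate + (change_weight if record.touches_change else 0.0)


def prioritize(records):
    """Order tests from highest to lowest risk score."""
    return sorted(records, key=risk_score, reverse=True)


history = [
    CaseHistory("test_static_pages", runs=200, failures=0, touches_change=False),
    CaseHistory("test_payment", runs=200, failures=30, touches_change=True),
    CaseHistory("test_login", runs=200, failures=10, touches_change=False),
]
print([r.name for r in prioritize(history)])
# ['test_payment', 'test_login', 'test_static_pages']
```

Tests at the bottom of the ranking, such as the static page check here, are the natural candidates to run less often or drop from the fast feedback loop.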

AI-driven regression testing helps teams maintain software stability while supporting faster release cycles. For example, validating core banking transactions or SaaS login flows before release ultimately enables faster and more reliable deployments.

AI for Bug Prediction

AI-based bug prediction moves QA from reactive to proactive by analyzing past defect data, code complexity, and developer activity patterns. One study of deep learning models applied across the software development lifecycle reports 90% to 92% accuracy and precision.

For example, when testing a FinTech app, AI may flag currency conversion logic and payment gateway integration as high-risk zones based on past failures.
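As a rough illustration of how past defects, code complexity, and developer activity can be combined into a risk estimate, the sketch below applies a logistic function to per-module metrics. The weights, bias, module names, and metric values are all hypothetical; a real model would learn its parameters from labelled defect history rather than use hand-picked numbers.

```python
import math

# Hypothetical weights; a real model would learn these from labelled defect history.
WEIGHTS = {"past_defects": 0.6, "complexity": 0.05, "recent_commits": 0.1}
BIAS = -3.0


def defect_probability(metrics):
    """Logistic score in (0, 1) computed from per-module metrics."""
    z = BIAS + sum(WEIGHTS[name] * metrics[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical metrics for three modules of a FinTech app.
modules = {
    "currency_conversion": {"past_defects": 6, "complexity": 25, "recent_commits": 8},
    "payment_gateway": {"past_defects": 4, "complexity": 30, "recent_commits": 5},
    "help_pages": {"past_defects": 0, "complexity": 3, "recent_commits": 1},
}

# Rank modules from most to least likely to contain a defect.
ranked = sorted(modules, key=lambda m: defect_probability(modules[m]), reverse=True)
for name in ranked:
    print(f"{name}: {defect_probability(modules[name]):.2f}")
```

With these sample numbers the currency conversion and payment gateway modules rank above the help pages, which is the kind of ordering a team would use to decide where to focus testing first.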

Benefits of AI Bug Prediction

- AI identifies high-risk code areas and highlights unstable modules, like payment APIs or authentication layers in repositories managed via GitHub, so bugs can be found sooner.

- By prioritizing modified microservices or recently deployed features, AI helps teams focus testing efforts effectively using insights from tools like SonarQube.

- AI detects memory leaks or performance bottlenecks in staging environments before release, which reduces production defects and minimizes costly post-production issues.

- AI improves software reliability by identifying patterns leading to crashes in mobile apps tested via Firebase Test Lab. This ensures consistent application performance and stability.

Using analytics from platforms such as IBM Watson to anticipate failure scenarios, AI-driven models forecast defects based on past trends and allow teams to resolve issues before they impact end users.

AI Bug Prediction Model:

Historical Bug Data

↓

AI Analysis

↓

High-Risk Code Areas

↓

Focused Testing

Popular AI Testing Tools (That Teams Actually Use)

Here we have compiled a list of some of the popular AI-powered testing tools that are used by modern QA teams, along with what makes them stand out:

- Testim: One of the top-rated tools, best known for its speed and ease of use. It leverages artificial intelligence for self-healing locators and stable test automation, which makes it a popular choice for teams seeking quick UI testing without much maintenance overhead.

- Functionize: Functionize uses Natural Language Processing (NLP) and Machine Learning (ML) for test case creation and maintenance. Its strong self-healing capabilities automatically adapt to UI changes, making it an ideal choice for fast-evolving applications.

- Applitools: Applitools leads in visual artificial intelligence testing and works with visual comparison algorithms to identify UI inconsistencies across browsers and devices, which is essential for pixel-perfect validation in design-sensitive applications.

- Mabl: Mabl comes with built-in analytics, seamless CI/CD integration, and auto-healing tests, making it well-suited for agile teams practicing continuous testing.
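Several of the tools above advertise self-healing locators. The general idea can be sketched as a locator that tries a primary strategy and then falls back to alternatives when the UI changes. This is a toy illustration over an in-memory "page", not how Testim, Functionize, or Mabl implement the feature; the element attributes and fallback order are assumptions.

```python
# A toy "page": each element is a dict of attributes. Real tools query a live DOM.
PAGE_V2 = [
    {"id": "btn-pay-now", "text": "Pay now", "role": "button"},
    {"id": "link-help", "text": "Help", "role": "link"},
]

# Hypothetical locator: a primary strategy plus fallbacks recorded from earlier runs.
PAY_BUTTON = [
    ("id", "pay-button"),  # primary locator, broken by a UI change
    ("text", "Pay now"),   # fallback: visible text
    ("role", "button"),    # fallback: element role
]


def find_element(page, strategies):
    """Try each (attribute, value) pair in order; return the match and the strategy used."""
    for attribute, value in strategies:
        for element in page:
            if element.get(attribute) == value:
                return element, (attribute, value)
    return None, None


element, used = find_element(PAGE_V2, PAY_BUTTON)
print(element["id"], used)  # btn-pay-now ('text', 'Pay now')
```

Returning the strategy that matched is a deliberate choice: logging it lets a human review which locators "healed" and confirm that the fallback found the intended element.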

Risks of Over-Automation

While Artificial Intelligence (AI) significantly enhances testing efficiency, an over-reliance on automation can introduce potential risks that may impact overall software quality and reliability.

- Lack of Human Judgment: AI tools may miss usability issues or business logic errors that human testers can detect, such as a poor checkout experience that still passes automated tests in Testim.

- High Initial Setup Costs: AI testing tools may require significant upfront investment, including training. For example, Functionize can cost thousands annually, depending on scale.

- Dependency on Data Quality: Poor-quality or incomplete data in systems like Jira can result in incorrect test predictions and prioritization.

- Maintenance Complexity: Over-automation can lead to complex test ecosystems. Large automated suites running across CI/CD pipelines in Jenkins need continuous monitoring and updates.

- False Positives & Over-Reliance: False alerts from AI tools lead to unnecessary debugging effort, such as visual mismatches reported by Applitools for minor UI changes.
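One common way to limit false positives from visual checks is to tolerate small differences instead of failing on any changed pixel. The sketch below uses naive per-pixel thresholding on grayscale grids. It is only an illustration of the trade-off, not the comparison algorithm used by Applitools or any other product, and the tolerance values are arbitrary assumptions.

```python
def mismatch_ratio(baseline, candidate, per_pixel_tolerance=10):
    """Fraction of pixels whose grayscale values differ by more than the tolerance."""
    if len(baseline) != len(candidate) or any(
        len(a) != len(b) for a, b in zip(baseline, candidate)
    ):
        raise ValueError("images must have identical dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    differing = sum(
        1
        for row_a, row_b in zip(baseline, candidate)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > per_pixel_tolerance
    )
    return differing / total


def is_visual_regression(baseline, candidate, max_ratio=0.05):
    """Flag a regression only when more than max_ratio of pixels changed."""
    return mismatch_ratio(baseline, candidate) > max_ratio


baseline = [[0] * 10 for _ in range(10)]
minor = [row[:] for row in baseline]
minor[0][0] = 255  # one changed pixel out of 100
major = [[255] * 10 for _ in range(10)]

print(is_visual_regression(baseline, minor))  # False (1% of pixels changed)
print(is_visual_regression(baseline, major))  # True (100% of pixels changed)
```

Raising the thresholds reduces noise but also risks hiding real defects, which is why a human should review how these limits are set.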

AI systems rely heavily on data, and inaccurate data can lead to incorrect predictions. Therefore, organizations must balance automation with human expertise. Always remember: AI can tell you what might break, but not always what feels wrong.

Hybrid AI + Manual Testing Strategy

The most effective QA approach blends AI automation with human expertise. It pairs the speed, scalability, and data-processing capabilities of AI with the contextual understanding, intuition, and domain expertise of human testers.

According to industry reports such as Capgemini’s World Quality Report, organizations that combine hybrid testing with AI-driven approaches see 30% to 50% faster test execution and 20% to 30% better defect detection.

Best Practices for Hybrid Testing:

- Use AI for repetitive and data-heavy tasks like large dataset validation, regression suite automation, and pattern recognition, which can reduce execution time by 40% to 60%.

- Reserve manual testing for exploratory and usability scenarios, focusing on real-world user behavior, edge-case exploration, and UX validation.

- Combine AI insights with human decision-making: let AI highlight high-risk areas based on historical data, and rely on human judgment to validate business impact.

- Continuously monitor AI testing performance by regularly evaluating AI models and test outcomes to avoid drift and false positives.
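The monitoring practice in the last bullet can be made measurable. A minimal sketch, assuming each AI-flagged failure is later triaged by a human as a confirmed defect or a false positive, is to track precision over time and alert when it drops. The record format and the `max_drop` threshold below are hypothetical.

```python
def precision(outcomes):
    """Share of AI-flagged failures that humans confirmed as real defects."""
    flagged = [o for o in outcomes if o["flagged"]]
    if not flagged:
        return None  # nothing was flagged, so precision is undefined
    confirmed = sum(1 for o in flagged if o["confirmed"])
    return confirmed / len(flagged)


def has_drifted(baseline_precision, current_precision, max_drop=0.10):
    """True when precision fell by more than max_drop versus the baseline."""
    return (baseline_precision - current_precision) > max_drop


# Hypothetical triage records: 9 of 10 flags confirmed last month, 6 of 10 this week.
last_month = [{"flagged": True, "confirmed": True}] * 9 + [{"flagged": True, "confirmed": False}]
this_week = [{"flagged": True, "confirmed": True}] * 6 + [{"flagged": True, "confirmed": False}] * 4

p_base = precision(last_month)
p_now = precision(this_week)
print(has_drifted(p_base, p_now))  # True
```

A drop like this would be a signal to retrain the model or revisit its input data before trusting its prioritization again.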

Organizations adopting a hybrid QA strategy benefit from improved software reliability, faster release cycles, and reduced testing costs. A combination of automation and human intelligence brings a balance of speed and quality, making the applications not just functionally correct but also intuitive and user-friendly.

Key Takeaways

- AI Accelerates Testing Cycles

- Improves Test Coverage and Accuracy

- Enables Predictive Quality Assurance

- Reduces Manual Effort and Costs

- Enhances Regression Testing Efficiency

- Supports Continuous Testing in CI/CD Pipelines

- Requires a Balanced (Hybrid) Approach

Hybrid QA Testing Model:

QA Process

/ \

AI Testing Manual Testing

\ /

Improved Software Quality

Conclusion

AI is not strictly mandatory, but it is fast becoming best practice in modern, fast-paced environments. When Agile and DevOps teams adopt AI-powered testing approaches, they can reduce regression cycles by 40% to 50%, enabling faster releases through automation and smarter test prioritization.

Today, teams use AI to detect high-risk code areas, auto-generate test cases, and integrate tests directly into CI/CD pipelines for regular feedback.

A hybrid strategy is the most practical and effective approach. It enables teams to ship quickly without compromising quality, making AI a key driver of next-generation QA strategies.